Some old gems I picked up at Agile 2010 from “Agile Test Automation Strategy for the non-Technical” by Gerard Meszaros (author of xUnit Test Patterns).

These are still as relevant today as they were 5 years ago.

First, there are 2 critical definitions to provide context…

- Legacy code: Code that does not have automated unit tests.

- Legacy products: Products that contain legacy code.

However – a legacy product does not have to contain only legacy code.

Now, considering the difference between a legacy product and legacy code, think about your work on “legacy products”.

- If you are adding new code, where do you add it and what testing do you do?

- If you are modifying legacy code, how do you treat it and what testing do you do?

Here’s a quick refresh of the testing pyramid concept…

The (grossly simplified) ideal-world view is that you have a large number of fast, automated unit tests as a safe platform, upon which you build a suite of automated and manual functional tests, with your broader system tests at the peak.

Most legacy products and systems have this pyramid inverted: heavy investment in system tests and UI automation, insufficient small functional tests and few, if any, unit tests.

Great. So What?

Stop trying to “Correct” the testing pyramid.

Rather than wasting our constrained capacity trying to automate everything, we must understand that legacy products have a testing “sweet spot”.

Focus testing and test automation strategy and investment on the areas of highest risk and highest ROI.

Even at the uppermost level, automate the things that steal most of our time first. This frees us up to do more value-added work.

Do not try to retrofit unit testing into legacy code.

To paraphrase Gerard, I distinctly remember him saying “Don’t waste your time unit testing legacy code”.

Instead, invest in the middle layer – the small automated functional tests.

This is because, for the volume of code you are working with and its maturity, small functional tests will provide you a far higher return on investment than the same effort spent unit testing legacy code.

Given this is from the guy who literally “wrote the book” on unit testing, this surprised me at first. It all becomes clearer in the next 2 points he makes!
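To make “small automated functional test” a little more concrete, here is a minimal sketch in Python. The `legacy_generate_invoice` function and its discount rule are invented purely for illustration; the point is only that the test drives the legacy code through its existing entry point and checks observable behaviour, without trying to isolate the internals.

```python
import unittest


def legacy_generate_invoice(customer, items):
    """Hypothetical stand-in for a long, untested legacy routine."""
    total = 0
    lines = []
    for name, qty, price in items:
        line_total = qty * price
        if qty >= 10:
            # Business rule buried in the middle of the loop.
            line_total = line_total * 0.9
        total += line_total
        lines.append(f"{name} x{qty}: {line_total:.2f}")
    lines.append(f"TOTAL for {customer}: {total:.2f}")
    return "\n".join(lines)


class InvoiceFunctionalTest(unittest.TestCase):
    """Small functional test: one realistic scenario, checked end to end."""

    def test_bulk_order_is_discounted_on_the_invoice(self):
        invoice = legacy_generate_invoice(
            "ACME", [("widget", 10, 2.00), ("gadget", 1, 5.00)]
        )
        self.assertIn("widget x10: 18.00", invoice)
        self.assertIn("gadget x1: 5.00", invoice)
        self.assertIn("TOTAL for ACME: 23.00", invoice)
```

Saved as a test module, this can be run with `python -m unittest`. One scenario like this covers the loop, the discount and the formatting in a single pass, which is where the return on effort comes from.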

(This is where the difference between legacy code and legacy product is key)

If you’re adding new code to a legacy product, unit test it.

Add a procedure or method call from the legacy code to the new code and then treat the new code as independent new code.

This practice also encourages better coding & architecture practices with greater readability.

Whilst the ROI on retrofitting unit tests to legacy code is low, the unit test effort for new code is recovered in prevented defects, fewer review issues, faster build & test feedback and simplified code maintenance.
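As a rough sketch of what “add a call from the legacy code to the new code” can look like, assume a hypothetical library-returns routine that needs a new late-fee rule. All names and numbers here (`calculate_late_fee`, `legacy_process_return`, the rate and cap) are made up for the example. The legacy function gains a single line; the new function stands alone and gets its own unit tests.

```python
import unittest


def calculate_late_fee(days_overdue, daily_rate=1.50, cap=30.00):
    """New code: a small, pure function with no ties to the legacy module."""
    if days_overdue <= 0:
        return 0.0
    return min(days_overdue * daily_rate, cap)


def legacy_process_return(record):
    """Hypothetical legacy routine; imagine many untested lines here."""
    record["status"] = "returned"
    # The only change to the legacy code: one call out to the new code.
    record["fee"] = calculate_late_fee(record["days_overdue"])
    return record


class CalculateLateFeeTest(unittest.TestCase):
    """Unit tests for the new code only."""

    def test_no_fee_when_not_overdue(self):
        self.assertEqual(calculate_late_fee(0), 0.0)

    def test_fee_accrues_per_day(self):
        self.assertEqual(calculate_late_fee(4), 6.0)

    def test_fee_is_capped(self):
        self.assertEqual(calculate_late_fee(100), 30.0)
```

The legacy routine itself stays covered by functional tests rather than unit tests; only the new function is held to the unit-test standard.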

Legacy code modifications should be refactored into new code.

If you are modifying legacy code, use good refactoring patterns to turn it into new code.

Now that it’s new code and it’s small, unit test it!

The “Extract Method” refactoring pattern is of particularly high value here. Essentially, you have a fragment of code within a method that fulfils a particular purpose. Pull it out into its own short, well-named method and treat it as new.
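Here is a small before-and-after sketch of Extract Method in Python. The registration routine and its names are hypothetical, chosen only to show the mechanics: the fragment that cleans up the email address moves into its own function, behaviour stays the same, and the extracted function becomes new code that is easy to unit test.

```python
import unittest


def register_user_before(form, users):
    """Before: the normalisation fragment is buried inside the method."""
    email = form["email"].strip().lower()
    if email in users:
        raise ValueError(f"{email} is already registered")
    users[email] = form["name"]
    return email


def normalise_email(raw):
    """After: the extracted fragment, now short, named and testable."""
    return raw.strip().lower()


def register_user(form, users):
    """After: the original method delegates to the extracted function."""
    email = normalise_email(form["email"])
    if email in users:
        raise ValueError(f"{email} is already registered")
    users[email] = form["name"]
    return email


class NormaliseEmailTest(unittest.TestCase):
    def test_strips_whitespace_and_lowercases(self):
        self.assertEqual(normalise_email("  Bob@Example.COM "), "bob@example.com")
```

Because the extraction does not change behaviour, `register_user` and `register_user_before` return the same result for the same input, which is a handy check to make while refactoring.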

Avoid merciless or repeated refactoring.

This point came from Naresh Jain during his time working at Industrial Logic but fits here…

Refactoring is a discipline; don’t get carried away.

Understand what refactoring really means, study the patterns involved and use them with caution.

Wholesale rewrites are not refactoring.

I’d argue that all developers will work on legacy code in their careers so I strongly recommend a complete read of these…

- “Working Effectively with Legacy Code” – Michael Feathers

- “Refactoring – Improving the Design of Existing Code” – Martin Fowler et al.

- There’s also a third – “Refactoring to Patterns” by Josh Kerievsky (I’ve been told it’s good but I’ve still not read it so I can’t personally recommend it – yet).

There’s also a great cheat-sheet on code smells to refactorings here.

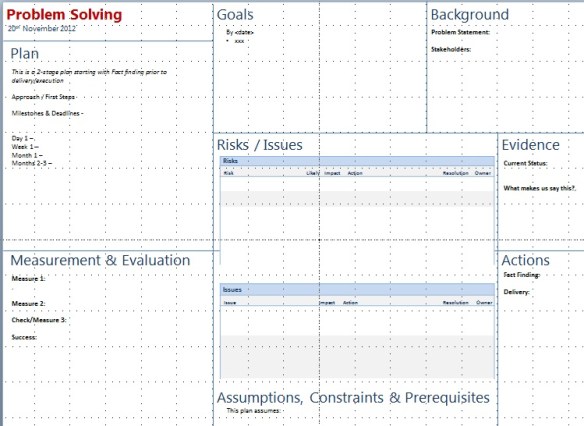
